Chris S's Email & Phone Number

Staff SRE at LinkedIn

Chris S Email Addresses

Chris S's Work Experience

Yahoo!

Senior Systems Engineer - InfraOps

September 2011 to July 2012

Isilon Systems

Technical Support Engineer

July 2008 to October 2009

Oracle Corporation

System Administrator - Global DC Operations, Developer - Global IT Tools

March 2007 to May 2009

Pervasive Software

Lab System Administrator

August 2006 to March 2007

Zebra Imaging

Systems Administrator

January 2006 to August 2006

Dell, Inc

Server Support Analyst

December 2003 to October 2005

Frequently Asked Questions about Chris S

What is Chris S's email address?

Email Chris S at [email protected] and [email protected]. These are the most up-to-date email addresses found for Chris S in 2024.

What is Chris S's phone number?

Chris S's phone number is 8645577300.

How can I contact Chris S?

To contact Chris S, send an email to [email protected] or [email protected]. To call Chris S, dial 8645577300.

What company does Chris S work for?

Chris S works for LinkedIn.

What is Chris S's role at LinkedIn?

Chris S is a Staff Site Reliability Engineer - Content.

What industry does Chris S work in?

Chris S works in the Internet industry.

Chris S's Professional Skills Radar Chart

Based on our findings, Chris S is ...

What's on Chris S's mind?

Based on our findings, Chris S is ...

Chris S's Estimated Salary Range

Find emails and phone numbers for 300M professionals.

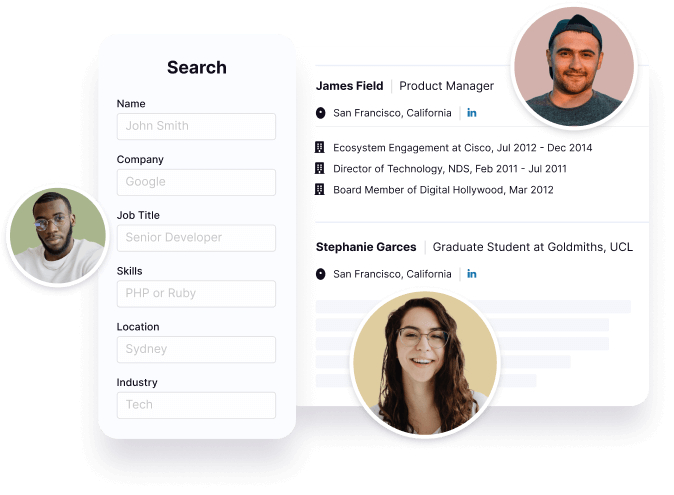

Search by name, job titles, seniority, skills, location, company name, industry, company size, revenue, and other 20+ data points to reach the right people you need. Get triple-verified contact details in one-click.In a nutshell

Chris S's Ranking

Ranked #1,027 out of 20,536 for Staff Site Reliability Engineer - Content in Texas

Chris S's Personality Type

Extraversion (E), Intuition (N), Feeling (F), Judging (J)

Average Tenure

2 year(s), 0 month(s)

Chris S's Willingness to Change Jobs

Unlikely

Likely

Open to opportunity?

There's a 90% chance that Chris S is seeking new opportunities.

Chris S's Social Media Links

/in/chrissuttles /school/accdistrict/ /company/linkedin