Madeleine Essam's Email & Phone Number

Manager Assistant & HR Manager at Tech-World

Madeleine Essam Email Addresses

Madeleine Essam Phone Numbers

Madeleine Essam's Work Experience

Servarena

HR & Administration Manager

February 2016 to April 2019

Dimofinf

HR & Administrative Assistant

April 2013 to January 2016

CTC Academy

Training Supervisor

November 2012 to March 2013

Arabian Architecture

Chairman Assistant

November 2006 to November 2009

Wadi Travel

Admin Coordinator

August 2005 to November 2006

Madeleine Essam's Education

Minia University

January 2001 to January 2005

Frequently Asked Questions about Madeleine Essam

What company does Madeleine Essam work for?

Madeleine Essam works for Servarena.

What is Madeleine Essam's role at Servarena?

Madeleine Essam is the HR & Administration Manager at Servarena.

What is Madeleine Essam's personal email address?

Madeleine Essam's personal email address is ma****[email protected]

What is Madeleine Essam's business email address?

Madeleine Essam's business email address is madeleine.essam@***.***

What is Madeleine Essam's Phone Number?

Madeleine Essam's phone number is (**) *** *** 191

What industry does Madeleine Essam work in?

Madeleine Essam works in the Information Technology & Services industry.

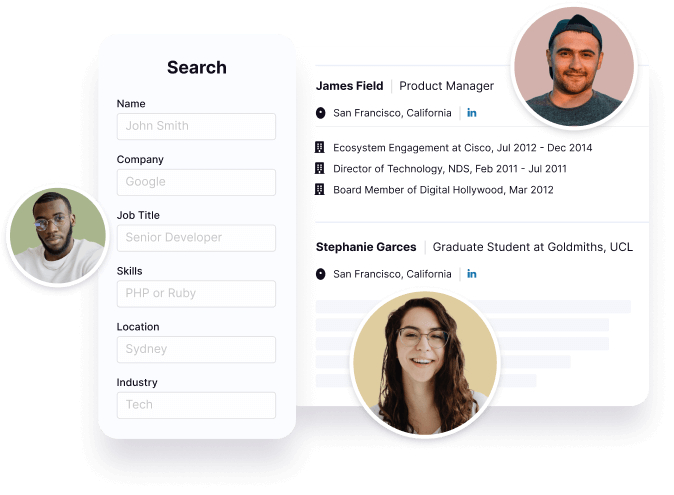

Find emails and phone numbers for 300M professionals.

Search by name, job titles, seniority, skills, location, company name, industry, company size, revenue, and other 20+ data points to reach the right people you need. Get triple-verified contact details in one-click.In a nutshell

Madeleine Essam's Personality Type

Extraversion (E), Intuition (N), Feeling (F), Judging (J)

Average Tenure

2 years, 0 months

Madeleine Essam's Willingness to Change Jobs

Open to opportunity?

There's a 94% chance that Madeleine Essam is seeking new opportunities.

Madeleine Essam's Social Media Links

/in/madeleineessam